Hi,

I hope you have read my earlier post on recovering vCenter Server after an unexpected outage in the datacenter, and on how to revive vCenter Server and regain proper access to the inventory.

Now let's go a little deeper into another scenario. With vSphere 5.5, a typical vSphere environment these days looks something like this:

- vCenter Server 5.5 with the latest updates, installed on a virtual machine with the latest virtual hardware version (Hardware Version 10)
- Inventory Service on a separate virtual machine, also with Hardware Version 10
- A Nexus 1000V or VMware vSphere Distributed Switch (VDS) carrying every type of traffic: management, VM, vMotion, iSCSI, NFS, FT, plus control, packet and management traffic for the Nexus 1000V
- The ESXi host management network is on the VDS as well, so keep this in mind

Now let's assume an outage has occurred, you were able to connect to the ESXi host, and you managed to track down the vCenter Server VM. Oh man, that was a pain. I know that feeling, which is why I wrote the earlier post on the available ways to recover vCenter Server from an outage. So let's continue.

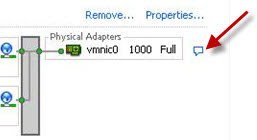

If you check, the VM traffic is going through the VDS and the vCenter Server VM has one of the dvPortGroups selected. You restored a standard vSwitch on the ESXi host through the DCUI, so you now have connectivity to the ESXi host using the vSphere Client.

The vCenter Server service does not start because it depends on the Inventory Service, which runs on a different virtual machine. Luckily, you found that VM and registered it on the same host as the vCenter Server VM.

But the two VMs, vCenter Server and Inventory Server, still cannot connect to each other, because both use a dvPortGroup on the VDS.

You do have the standard vSwitch on the ESXi host, which you can use temporarily, but how do you change the vNIC settings of the VMs?

This is where the trouble starts.

You can't edit the virtual machine settings, because you are connected to the ESXi host through the vSphere Client.

To change the vNIC settings of a Hardware Version 10 virtual machine, you need to connect with the vSphere Web Client. But the Web Client works through vCenter Server, and the vCenter Server service will not start because it depends on the Inventory Service running in the other virtual machine. You are stuck in a loop: a chain reaction in which you can't make vCenter Server and Inventory Server talk to each other.

By design, you can't edit the settings of a Hardware Version 10 virtual machine except through the Web Client.

So here is the trick to fix this.

First, shut down both the vCenter Server and the Inventory Server virtual machines and remove them from the inventory: right-click each virtual machine and select the "Remove from Inventory" option.
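The same two steps can also be done from the ESXi Shell with `vim-cmd`. A minimal sketch, assuming VMware Tools is running in both guests (so `power.shutdown` can perform a clean guest shutdown) and that the VM display names contain no spaces; `vm_id_by_name` and `shutdown_and_unregister` are hypothetical helper names, not VMware commands:

```shell
# Print the VM ID for a given display name, reading the output of
# `vim-cmd vmsvc/getallvms` on stdin (first line is the header).
# Assumption: the display name contains no spaces.
vm_id_by_name() {
    awk -v name="$1" 'NR > 1 && $2 == name { print $1; exit }'
}

# Hypothetical helper: cleanly shut down a VM, wait (up to ~5 minutes)
# until it is powered off, then remove it from the host inventory.
shutdown_and_unregister() {
    id=$(vim-cmd vmsvc/getallvms | vm_id_by_name "$1")
    [ -n "$id" ] || { echo "VM '$1' not found" >&2; return 1; }
    # Guest OS shutdown; needs VMware Tools running in the guest.
    vim-cmd vmsvc/power.shutdown "$id"
    tries=0
    until vim-cmd vmsvc/power.getstate "$id" | grep -q "Powered off"; do
        tries=$((tries + 1))
        [ "$tries" -le 60 ] || { echo "Timed out waiting for '$1'" >&2; return 1; }
        sleep 5
    done
    vim-cmd vmsvc/unregister "$id"
}
```

For example, with hypothetical VM names: `shutdown_and_unregister vcenter-vm`, then `shutdown_and_unregister inventory-vm`. Unregistering only removes the VM from the host inventory; its files stay on the datastore.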

Let's assume you have created a standard portgroup called "Test" on the standard vSwitch.
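If the "Test" portgroup does not exist yet, it can be created from the ESXi Shell with `esxcli`. A minimal sketch, assuming the standard vSwitch restored through the DCUI is named vSwitch0 (check with `esxcli network vswitch standard list`); `portgroup_exists` and `ensure_portgroup` are hypothetical helpers, and the name check relies on the column layout of the `portgroup list` output (name left-aligned, followed by at least two spaces):

```shell
# Succeed if a portgroup with exactly this name appears in the output of
# `esxcli network vswitch standard portgroup list`, read on stdin.
portgroup_exists() {
    awk -v pg="$1" 'index($0, pg "  ") == 1 { found = 1 } END { exit !found }'
}

# Hypothetical helper: create the portgroup on the given standard vSwitch
# (vSwitch0 by default) only if it is not already there.
ensure_portgroup() {
    pg="$1"; vsw="${2:-vSwitch0}"
    if esxcli network vswitch standard portgroup list | portgroup_exists "$pg"; then
        echo "Portgroup '$pg' already exists"
    else
        esxcli network vswitch standard portgroup add \
            --portgroup-name="$pg" --vswitch-name="$vsw"
    fi
}
```

For example: `ensure_portgroup Test vSwitch0`.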

Now enable SSH access on the ESXi host and connect with PuTTY, or use the ESXi Shell from the DCUI instead.

Go to the virtual machine's directory:

cd /vmfs/volumes/datastore-name/vcenter-vm/

Now use the "vi" editor to modify the .vmx file of the vCenter Server virtual machine, then repeat the same steps in the Inventory Server virtual machine's directory.

Go to the line that holds the hardware version and enter insert mode by pressing "a" (or "i") in vi. The line looks like this:

virtualHW.version = "10"

Change the value from 10 to 8, press Esc, then save and exit with :wq!. You will be back at the root prompt of the ESXi host.
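If you would rather not edit the file interactively, the same change can be scripted. A minimal sketch, assuming the .vmx contains the standard `virtualHW.version = "10"` line; `get_hw_version` and `set_hw_version` are hypothetical helpers, and the backup goes to a `.vmx.bak` file next to the original. Keep in mind that lowering the hardware version by hand is an unofficial workaround and only works if the VM does not use devices or features introduced after Hardware Version 8.

```shell
# Print the virtual hardware version recorded in a .vmx file (e.g. 10).
get_hw_version() {
    sed -n 's/^virtualHW\.version *= *"\([0-9]*\)".*/\1/p' "$1"
}

# Hypothetical helper: set virtualHW.version in a .vmx file.
# A backup copy is made first; the original file is then rewritten in place
# (same inode, so its permissions are kept), without relying on `sed -i`.
set_hw_version() {
    vmx="$1"; ver="$2"
    cp "$vmx" "$vmx.bak" || return 1
    sed "s/^virtualHW\.version *= *\"[0-9]*\"/virtualHW.version = \"$ver\"/" \
        "$vmx.bak" > "$vmx"
}
```

For example, with a hypothetical .vmx file name: `set_hw_version /vmfs/volumes/datastore-name/vcenter-vm/vcenter-vm.vmx 8`, then confirm with `get_hw_version` before registering the VM.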

Now go back to the vSphere Client, browse each datastore in turn, and register both the vCenter Server and the Inventory Server virtual machines into the inventory (right-click the .vmx file and select "Add to Inventory").
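Registering can also be done from the ESXi Shell with `vim-cmd solo/registervm`. A minimal sketch; `register_vm` is a hypothetical helper that refuses to register a .vmx that does not yet report Hardware Version 8, so a missed edit is caught before you go looking for Edit Settings:

```shell
# Hypothetical helper: register a .vmx with the host inventory, but only if
# its virtualHW.version line already reads "8".
register_vm() {
    vmx="$1"
    if ! grep -q '^virtualHW\.version *= *"8"' "$vmx"; then
        echo "$vmx does not report virtualHW.version = \"8\"; not registering" >&2
        return 1
    fi
    vim-cmd solo/registervm "$vmx"
}
```

For example, with a hypothetical .vmx file name: `register_vm /vmfs/volumes/datastore-name/vcenter-vm/vcenter-vm.vmx`.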

You can now use Edit Settings in the vSphere Client to select the standard portgroup "Test" for the network adapter of both VMs.

Power on both VMs and verify that the vCenter Server service is running.

Once verified, you can connect to vCenter Server with either the vSphere Web Client or the vSphere Client. Make sure all the hosts and cluster settings are as they were before the outage.

Now you should be back in business with minimal downtime.

I hope you find this information useful if you hit this Hardware Version 10 limitation. Hopefully future products will let you change a virtual machine's settings without any specific client requirement. With vSphere 5.5 Update 2, you can now edit the settings of a Hardware Version 10 VM using the vSphere Client, so update your ESXi hosts and vSphere Client to 5.5 U2 to get this benefit; that release resolves other issues too.

Please share and care !!

Enjoy !!